01.10.21

Statement on ethical considerations on autonomous weapons: not targeting people

By Richard Moyes

The latest session of states’ talks in Geneva on autonomous weapons, held between 24 September and 1 October, concentrated on discussion of the Convention on Certain Conventional Weapons (CCW) Group of Governmental Experts (GGE) Chair’s paper on “elements on possible consensus recommendations in relation to the clarification, consideration and development of aspects of the normative and operational framework on emerging technologies in the area of lethal autonomous weapons systems”, which will be taken forward towards the CCW’s Review Conference in December.

At that point, states will have a choice to make about how international work on this issue is taken forward. We believe that it is imperative that new international law is negotiated that includes prohibitions to rule out systems that can’t be meaningfully controlled, prohibitions on machines killing human beings, and positive obligations to ensure meaningful human control over all weapons systems that use the processing of sensor information to apply force automatically.

During the discussion on ethics that took place on Friday 1 October, Article 36’s Richard Moyes made a statement to emphasise the importance of recognising the ethical concerns around targeting people with autonomous weapons as countries move forward in their work:

Article 36 Statement to the CCW GGE on Lethal Autonomous Weapons Systems, 1 October 2021

Delivered by Richard Moyes

Thank you chair,

To comment briefly on the issue of ethical considerations. There is no doubt these additional paragraphs provide a strengthening of the paper and start to fill a gap that was previously present.

But rather than commenting on the wordings of these paragraphs I wanted mainly to highlight something that is still missing here, and that is a recognition that machines identifying people directly as targets raises distinct ethical concerns.

Machines of course do not see people as people, and we see in systems that would target people directly a form of machine processing that reduces people to objects, dehumanising them, blind to human dignity.

In other areas of society there has already been an acknowledgement that automated decision making that harms people requires limits and obligations.

We see the potential to move towards this processing of people to be victims of force as one of the most distinct challenges posed by autonomous weapons systems.

The ethical issues raised in this area are already perhaps recognised in some existing policy and practice. We note that sentry gun technologies that would target people are not currently, generally, used in an autonomous mode; we hear state denials of certain new drone systems being used autonomously against people; and we see in some national policy directives a distinction drawn between anti-materiel and anti-personnel systems.

We call for a prohibition on systems that would target people directly. This is absent from the current paper. And we see such a prohibition deriving substantially from ethical concerns in conjunction with a precautionary orientation to existing legal assumptions and requirements, as well as a step that would help to avoid prejudice and bias and further pushing the burden of avoiding harm onto civilians.

We would like to see a prohibition on the targeting of people reflected in the text. If the paper does not also acknowledge that systems that target people directly raise particular ethical concerns then this would be a significant shortcoming.

Thank you.

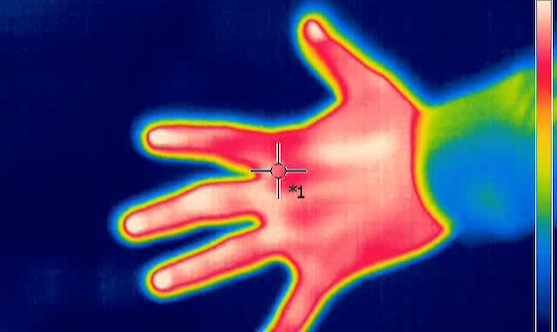

Featured image: Infrared thermography image of a hand (Jarek Tuszyński / CC-BY-SA-3.0, https://commons.wikimedia.org/wiki/File:Thermal_image_-_hand_-_1.jpg)